Category Archives: Flash CS5

FITC Amsterdam 2011 was great!

Posted on 12 March 2011

I was very happy when Shawn Pucknell asked me half a year ago to do a session at FITC Amsterdam. It had already been 2 years since I last spoke at FITC, so I was more than ready for it!

So last Monday we left Belgium and drove to Amsterdam (a 2.5-hour drive) for another great FITC event!

When we arrived at the venue on Monday to do some tech testing, the first thing I realised was that the venue was really, really big compared to the venue of previous years! Maybe the new venue is just a little bit too big …

But as always at FITC events, the atmosphere was really crazy and the fun at the parties was great! This year I also hung out a lot in the brand new Influxis voodoo lounge, which had a lot of very cool sessions aaaand FREE beers 🙂

On Tuesday around noon, I did a duo session with my Happy Banana partner Wouter Verweirder, and we decided to do a talk about the interaction possibilities that the Flash Platform has to offer. Because we both had a lot of client work in the last few weeks, we still had to finalize the demos for our presentation the weekend before the conference and also the night before the presentation (actually, we didn’t sleep at all that night).

We wanted to tell a story that gives an overview of the different input methods for a Flash Platform project: going from sound, to camera and images, to mobile devices as controllers, and also the MS Kinect.

Some Kinect demos from our presentation were filmed by @_Driezzz, and the video gives you an idea of how much fun we had during the session! For these demos we used ActionScript code to combine AS3TUIO with OSCeleton, so we can translate skeleton joint information and use skeleton joints as touch points. This way, you can use the standard multitouch events. The code is ready to be downloaded from Wouter’s blog if you want to try it out yourself: blog.aboutme.be. Let us know if you do something cool with it 🙂

Here is the presentation of the session:

FITC was a really great opportunity to meet up with a lot of my Flash community friends. I also met a lot of cool new people, whom I hope to see again somewhere in conference land ;-).

I hope everybody liked the session. Feel free to give some feedback, and cu all soon!

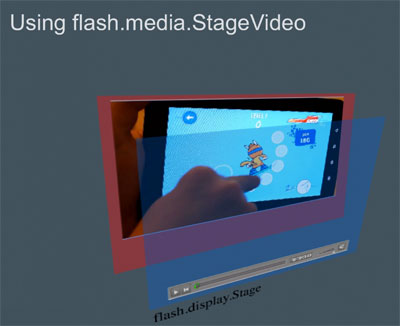

Flash Player 10.2 beta out now – Stage Video rocks!

Posted on 1 December 2010

Adobe is happy to announce a beta release of Flash Player 10.2 for Windows, Mac, and Linux. It is now available for download on Adobe Labs. Flash Player 10.2 beta introduces a number of enhancements, including Stage Video, a new API that delivers best-in-class, high-performance video playback across platforms. The new beta also includes the Internet Explorer 9 hardware acceleration support previewed earlier (in Flash Player “Square”), enhanced text rendering, and two popular requests from the community: a native custom mouse cursors API and support for full-screen playback with multiple monitors.

The video about Stage Video during Adobe MAX a few weeks ago:

Just to help you understand why this update is really important…

Facts and figures about the Flash Player for the web and mobile:

- 75% of all video on the web is viewed with Flash Player.

- Flash Player is on 99% of all connected PCs.

- 85% of the top 100 websites use Flash.

- 95% of the top 20 phone OEMs will deliver Flash.

Stage Video

I saw it myself at Adobe MAX during the sneak peeks, and it was really amazing to see such a performance improvement. Now it is already available for everybody to play with. To summarize, Stage Video stands for:

- smoothest, highest quality, seamless video

- lowest CPU usage, longest battery life

- optimized for multi-screen: PC, smartphone, tablet, television

- reaching also out to low-end PC devices

- API compatible with Flash Player 10.X

- no changes needed beyond updated SWF and wmode

Stage Video is already used on AIR for TV and Google TV and is a new way to present video to users. I encourage everybody to use StageVideo from now on 🙂
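To give an idea of what using it looks like, here is a minimal sketch in ActionScript 3. It is based on the StageVideo API as I understand it from the Flash Player 10.2 documentation, so details may differ in the beta. The class name `StageVideoSketch` and the file name `video.mp4` are placeholders I made up for illustration, and the SWF is assumed to be embedded with `wmode` set to `direct`. The sketch listens for the availability event, uses the first StageVideo object when one is available, and falls back to a classic Video object otherwise.

```actionscript
package {
    import flash.display.Sprite;
    import flash.events.StageVideoAvailabilityEvent;
    import flash.geom.Rectangle;
    import flash.media.StageVideo;
    import flash.media.StageVideoAvailability;
    import flash.media.Video;
    import flash.net.NetConnection;
    import flash.net.NetStream;

    // Hypothetical document class, for illustration only.
    public class StageVideoSketch extends Sprite {
        private var stream:NetStream;
        private var fallback:Video;
        private var started:Boolean = false;

        public function StageVideoSketch() {
            var connection:NetConnection = new NetConnection();
            connection.connect(null); // null = progressive download, no media server

            stream = new NetStream(connection);
            // Empty metadata handler so the player does not report a missing callback.
            stream.client = { onMetaData: function(info:Object):void {} };

            // The player tells us whether Stage Video can be used right now.
            stage.addEventListener(
                StageVideoAvailabilityEvent.STAGE_VIDEO_AVAILABILITY,
                onAvailability);
        }

        private function onAvailability(event:StageVideoAvailabilityEvent):void {
            if (event.availability == StageVideoAvailability.AVAILABLE) {
                // Stage Video is rendered behind the display list,
                // so remove the fallback Video if it was shown earlier.
                if (fallback && contains(fallback)) {
                    removeChild(fallback);
                }
                var stageVideo:StageVideo = stage.stageVideos[0];
                stageVideo.viewPort = new Rectangle(0, 0, stage.stageWidth, stage.stageHeight);
                stageVideo.attachNetStream(stream);
            } else {
                // Fall back to the classic Video object on the display list.
                if (!fallback) {
                    fallback = new Video(stage.stageWidth, stage.stageHeight);
                }
                fallback.attachNetStream(stream);
                addChild(fallback);
            }

            if (!started) {
                stream.play("video.mp4"); // placeholder file name
                started = true;
            }
        }
    }
}
```

Because the availability event can fire more than once (for example when the display state changes), the handler attaches the stream again each time but starts playback only once.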

Linking and liking:

Read the blog post about FP 10.2 by Thibault Imbert.

Get it here: http://labs.adobe.com/technologies/flashplayer10/

Tutorial: Getting started with StageVideo on Adobe Developer Connection

Adobe AIR Mobile: Application performance optimization on Android

Posted on 13 November 2010

When we talk about mobile development, we have to make sure our application is optimized as well as possible to run smoothly on a wide variety of devices. As Kevin Lynch said at MAX 2010, mobile development is like desktop/web development 7 years ago. Let’s take a look at how we can make sure that our mobile applications run smoothly. I can already tell you that a lot of testing on different devices (starting early in your development process) will always be necessary to achieve the results you want.

When your application slows down on a device, it means that the code execution per frame, the rendering per frame, or both are the bottleneck.

What to display in the DisplayList?

Always make sure you use the right DisplayObject for the right job. As you know, a MovieClip uses more bytes than a Sprite, and a Sprite uses more bytes than a Bitmap, etc. When building up your DisplayList, always keep in mind that the DisplayList really affects the memory usage of your application. If you have a set of Sprites on your stage, for example, you can draw them into a BitmapData object and put that in a Bitmap on the DisplayList. That approach can save memory, as long as the bitmap itself does not get too large!
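A minimal sketch of that idea in ActionScript 3 could look like this. The class name `FlattenSketch` and the helper `flatten()` are names I made up for illustration; only `BitmapData.draw()`, `getBounds()` and the display classes are standard Flash Player APIs. The sketch builds a group of 20 small Sprites, draws the whole group once into a BitmapData object, and adds a single Bitmap to the DisplayList instead of the 20 Sprites.

```actionscript
package {
    import flash.display.Bitmap;
    import flash.display.BitmapData;
    import flash.display.Sprite;
    import flash.geom.Matrix;
    import flash.geom.Rectangle;

    // Hypothetical document class, for illustration only.
    public class FlattenSketch extends Sprite {
        public function FlattenSketch() {
            // Build a group of vector Sprites that is never added to the stage.
            var group:Sprite = new Sprite();
            for (var i:int = 0; i < 20; i++) {
                var dot:Sprite = new Sprite();
                dot.graphics.beginFill(0x3399FF);
                dot.graphics.drawCircle(0, 0, 10);
                dot.graphics.endFill();
                dot.x = 20 + i * 15;
                dot.y = 40;
                group.addChild(dot);
            }

            // Only the flattened Bitmap ends up on the DisplayList.
            addChild(flatten(group));
        }

        // Draws 'source' and all of its children into one transparent Bitmap.
        private function flatten(source:Sprite):Bitmap {
            // Bounds in the source's own coordinate space.
            var bounds:Rectangle = source.getBounds(source);

            var data:BitmapData = new BitmapData(
                Math.ceil(bounds.width), Math.ceil(bounds.height),
                true, 0x00000000);

            // Shift the content so its top-left corner lands at (0, 0) in the bitmap.
            var matrix:Matrix = new Matrix();
            matrix.translate(-bounds.x, -bounds.y);
            data.draw(source, matrix);

            // Place the bitmap where the original content was.
            var bitmap:Bitmap = new Bitmap(data);
            bitmap.x = bounds.x;
            bitmap.y = bounds.y;
            return bitmap;
        }
    }
}
```

Keep in mind that the flattened result is static: the individual Sprites can no longer be moved or receive mouse events, so this fits best for content that does not change.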

When we talk about Bitmaps, we also have to say something about the 2 render operations of the Flash Player. In the Flash Player, every graphical element in your application is first drawn into a pixel buffer (=rasterizing), and those buffers of pixels are then arranged to make up your scene (=scene compositing) in the main pixel buffer (what you finally see on the device). Every frame, the player calculates a dirty region (=the redraw regions) to see what must be rasterized again and merged again into the main pixel buffer. All those tasks are done by the CPU when doing web/desktop development, but with Adobe AIR 2.5 targeting mobile devices, you can choose whether the CPU or the GPU does those important tasks.

CPU/GPU rendering

At this moment, iOS only supports a ‘special’ GPU mode, called GPU Blend. This means that the different pixel buffers are created by the CPU, which then sends them to the GPU. The GPU finally does the scene compositing.

On Android, GPU rendering is called GPU Vector, and the tasks are done fully by the GPU: both the creation of the individual pixel buffers and the scene compositing happen on the GPU. This can give you a huge performance boost. But how can we make sure we are using GPU rendering?

That is where bitmap caching comes in.